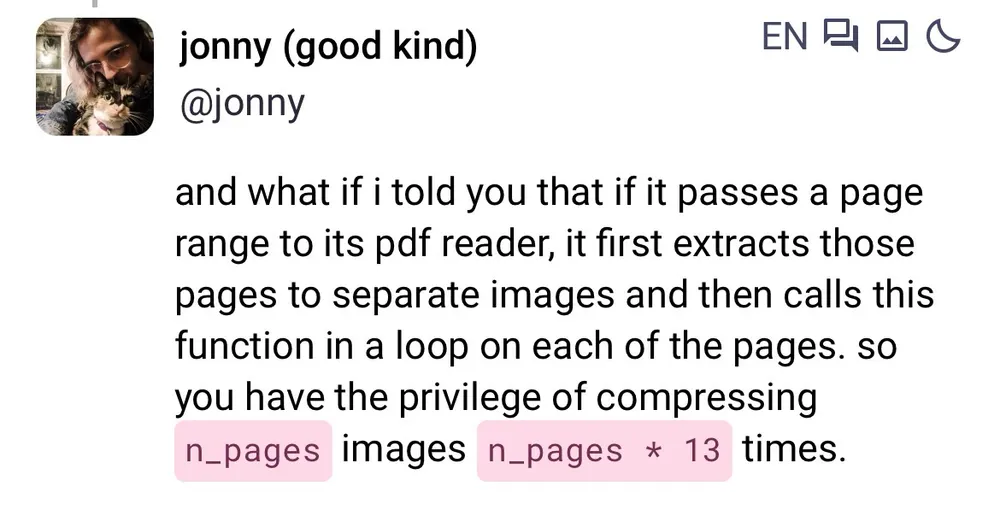

I’m fucking crying dude. Asking “pretty please do not introduce a security vulnerability”.

April 02, 2026 - 20:06 UTC
